
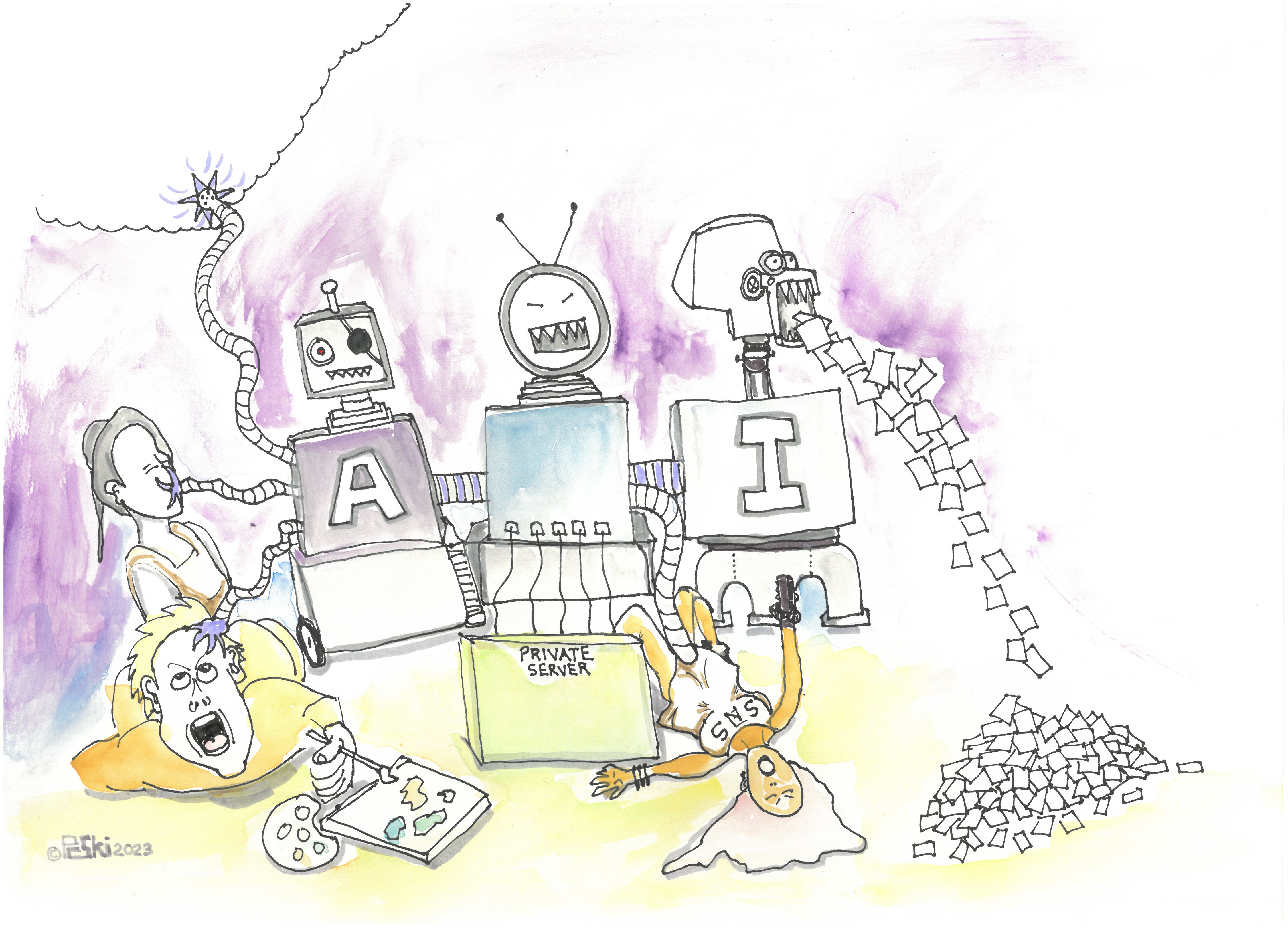

cyberbarf VOLUME 21 No 8 EXAMINE THE NET WAY OF LIFE MARCH 2023 THE A.I. ISSUE iTOONS WHETHER REPORT

©2023 Ski Words, Cartoons & Illustrations All Rights Reserved Worldwide Distributed by pindermedia.com, inc

cyberculture, commentary, cartoons, essays


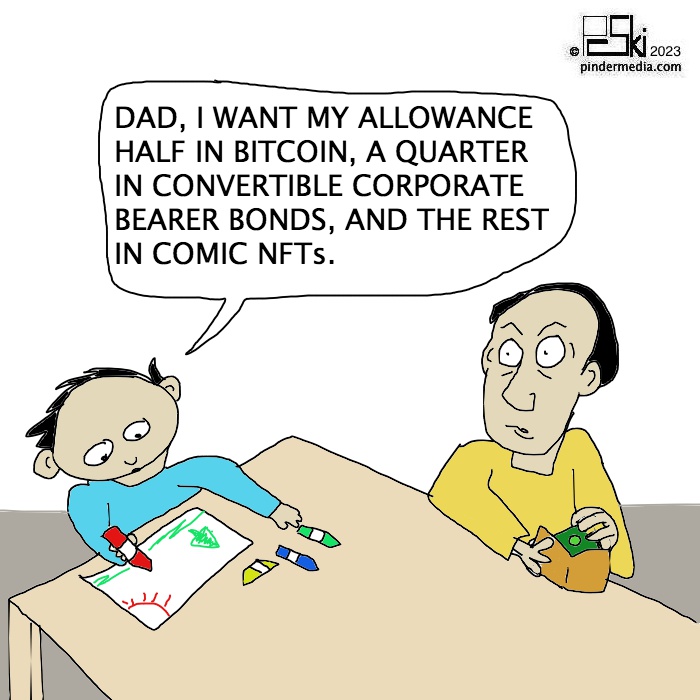

cyberbarf THE AI ISSUE

CYBERCULTURE

Less than two months into 2023, the AI dam broke. It has unleashed a flood of criticism, legal issues and a dystopian view of our future.

WHY IT MATTERS

Investors and techies gather in San Francisco to bathe in the generative AI hype sparked by ChatGPT. AI has become the next big thing in the tech industry, as shown at a recent generative AI conference hosted by the startup Jasper. The technology is expensive and unpredictable but still exciting to some. Participants were there to discuss the latest craze capturing the attention of the tech world: generative artificial intelligence.

The technology is known to the wider world through ChatGPT, which has captivated imaginations with its ability to generate creative text from written prompts. There are also generative AI programs, such as DALL-E, that create images from text prompts. Generative AI is a catch-all term for programs that use artificial intelligence (machine learning) to create new material from complex queries.

The venture capital community poured $1.4 billion last year into startups specializing in the technology. Where big money flows, big changes often follow. But generative AI is so new that startups are still trying to discover appropriate business uses and markets to make money. Many companies are looking to the technology to replace human workers, especially in focused, structured tasks such as customer support call centers. For example, a chat bot could replace a technician in guiding a customer through a computer or machine error code, or through a billing issue.

Ars Technica has been covering this expanding territory in depth. It summarized many public concerns: ChatGPT reached 100 million active users within two months of its release, making it the fastest-growing consumer application ever launched. Users are attracted to the tool's advanced capabilities and concerned by its potential to cause disruption in various sectors.
Much less discussed are the privacy risks and intellectual property infringement issues. Google has announced its own conversational AI, called Bard, and other tech companies will surely follow. Technology companies working on AI have well and truly entered an arms race. The problem is that it is all fueled by our personal data: from user lock-in data, to public social media posts, to emails, to document creation, to company website information.

ChatGPT was trained on more than 300 billion scraped words. In simple terms, other people's information and work product were copied and stored for the program to draw on later. This is not like a huge library of material acquired by purchase or donation and open to the public to use (within legal limits). No, this data collection was done without any prior public permission.

These programs need a massive amount of data. The more data a model is trained on, the better it gets at detecting patterns, anticipating what will come next, and generating plausible text. OpenAI, the company behind ChatGPT, fed the tool some 300 billion words systematically scraped from the Internet: books, articles, websites and posts, including personal information obtained without consent, Ars Technica reported.

The data collection used to train ChatGPT is problematic for several reasons. First, none of us were asked whether OpenAI could use our data. This is a clear violation of privacy, especially when the data is sensitive and can be used to identify us, our family members, or our location. Even when data is publicly available, its use can breach what is called contextual integrity. This is a fundamental principle in legal discussions of privacy. It requires that individuals' information not be revealed outside of the context in which it was originally produced. Also, OpenAI offers no procedures for individuals to check whether the company stores their personal information, or to request that it be deleted.
This is a guaranteed right under the European General Data Protection Regulation (GDPR), although it is still under debate whether ChatGPT complies with GDPR requirements. Personal information, copyrighted text and images have all been called up by the program with specific text prompts.

Clearly, the public is curious about the technology. The Daily Mail (UK) did a story on whether chatbot AI technology could create poems for Valentine's Day. It put the chatbot to the test by asking it to write a selection of poems inspired by love and romance, contrasted against real works by authors from around the world, from UK classics to Japanese haiku. In order to write a poem, the chatbot had to be fed many poems to learn structure, word patterns and so on. It is not thinking on its own to create new word patterns but mashing up existing ones according to keyword prompts. Is it creativity or regurgitation?

Engadget summarized OpenAI's history: In 2020, OpenAI officially launched GPT-3, a text generator able to summarize legal documents, suggest answers to customer-service inquiries, propose computer code [and] run text-based role-playing games. The company released its commercial API that year as well. All you have to do is write a prompt, and it will add text the AI thinks would plausibly follow. It has been used to write songs, stories, press releases, guitar tabs, interviews, essays, and technical manuals. 2021 saw the release of DALL-E, a text-to-image generator, and the company made headlines again last year with the release of ChatGPT, a chat client based on GPT-3.5, the latest GPT iteration. In January 2023, Microsoft and OpenAI announced a deepening of their research partnership with a multiyear, multibillion-dollar investment. However, the output is suspect at times: many scholars cannot look past the system's stubborn habit of producing false claims or citing sources that do not exist.

Generative AI as a whole is broader than OpenAI.
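
A toy illustration of the "remixing" idea: the Python sketch below is a bigram (Markov chain) text generator, a far simpler technique than the large neural networks behind GPT models, so treat it as an analogy rather than a description of how ChatGPT works. It records which word followed which in a tiny, hypothetical training text, then continues a prompt by repeatedly picking one of the recorded followers. The function names and the three-line corpus are invented for illustration. Every word pair it generates after the prompt already appears somewhere in its training text, which is the "mashing up existing word patterns" point in miniature; feeding it more text gives each word more possible continuations, echoing the point that more training data makes output look more varied and plausible.

```python
import random


def build_bigram_table(text: str) -> dict[str, list[str]]:
    """Record, for each word, every word that followed it in the training text."""
    words = text.split()
    table: dict[str, list[str]] = {}
    for current, following in zip(words, words[1:]):
        table.setdefault(current, []).append(following)
    return table


def continue_prompt(table: dict[str, list[str]], prompt: str, max_words: int, seed: int = 0) -> str:
    """Extend the prompt one word at a time using only word pairs seen in training."""
    rng = random.Random(seed)  # seeded so the toy output is repeatable
    output = prompt.split()
    for _ in range(max_words):
        if not output:
            break
        successors = table.get(output[-1])
        if not successors:  # the last word never had a follower in the training text
            break
        # Duplicates in the list make frequent continuations more likely to be picked.
        output.append(rng.choice(successors))
    return " ".join(output)


# Hypothetical three-line "training corpus" of love-poem phrases.
corpus = (
    "my love is like a red red rose "
    "my heart is like a singing bird "
    "my love is deeper than the sea"
)
table = build_bigram_table(corpus)
print(table["my"])  # ['love', 'heart', 'love']
print(continue_prompt(table, "my heart", max_words=6, seed=1))
```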

Generative AI is the practice of using machine learning algorithms to produce novel content, whether that's text, images, audio or video, based on a training body of labeled example data. It draws on the same broad family of machine learning techniques used in Google's AlphaGo, in song and video recommendation engines across the internet, and in vehicle driver-assist systems. Image models like Stability AI's Stable Diffusion or Google's Imagen are trained to turn patterns of random dots into progressively clearer, higher-resolution images, while automated text generators like ChatGPT remix text passages plucked from their training data to output suspiciously realistic, albeit frequently pedestrian, prose. Image generators can mimic (or, as some would say, outright copy) the style of famous artists or characters.

AI ART GENERATORS

Getty Images is well known for its extensive collection of millions of photographic images, including its exclusive archive of historical images and the wider selection of stock images hosted on iStock. Getty has filed lawsuits against Stability AI to prevent the unauthorized use and duplication of its stock images in artificial intelligence programs.

On one YouTube art channel, the host was using AI to create new art images. When the result popped up on his screen, a large copyright watermark from the original artist ran across the image. What appears to be happening is that the programs take database images labeled with the prompt keywords and layer them together to try to create a new, composite image. But at times, the result merely curates existing images with only minor deviations in color or composition. The prompt that worries artists most is "in the style of . . .", which they claim is a direct threat to, and infringement of, their original work.
Getty has alleged in court that Stability AI copied more than 12 million photographs from Getty Images' collection, along with the associated captions and metadata, without permission from or compensation to Getty Images, as part of its efforts to build a competing business. Getty also alleged that Stability AI went so far as to remove Getty's copyright management information, falsify its own copyright management information, and infringe upon Getty's famous trademarks by duplicating Getty's watermark on some images.

Stability AI is also facing a class-action lawsuit from artists claiming that the company trained its Stable Diffusion model on billions of copyrighted artworks without compensating artists or asking for permission. If the court sides with Getty, it could answer some of the legal questions that many artists have been asking since the controversy began. Notably, Stability AI has somewhat sympathized with artists protesting the technology, announcing a plan last month to let artists opt out of image training efforts. But how does an artist monitor an opt-out request, or enforce their legal rights, when 100 million people are potentially accessing their files without permission?

Google is trying to get around that issue. Google's Quick, Draw! program asks its users to help it gather data for its machine learning technology. If you use the Google drawing program, you are agreeing to a license with Google.
This license allows Google to:

- host, reproduce, distribute, communicate, and use your content, for example, to save your content on our systems and make it accessible from anywhere you go
- publish, publicly perform, or publicly display your content, if you've made it visible to others
- modify and create derivative works based on your content, such as reformatting or translating it
- sublicense these rights to:
  - other users to allow the services to work as designed, such as enabling you to share photos with people you choose
  - our contractors who've signed agreements with us that are consistent with these terms, only for the limited purposes of: operating and improving the services, which means allowing the services to work as designed and creating new features and functionalities. This includes using automated systems and algorithms to analyze your content:
    - for spam, malware, and illegal content
    - to recognize patterns in data, such as determining when to suggest a new album in Google Photos to keep related photos together
    - to customize our services for you, such as providing recommendations and personalized search results, content, and ads (which you can change or turn off in Ads Settings)

In short, all this legal jargon basically allows Google to use user-created content in any fashion it desires.

The Daily Mail (UK) reported that an American baseball writer used AI to depict all the US presidents as cartoon characters. ESPN contributor Dan Szymborski spent his Presidents' Day holiday weekend using an artificial intelligence program to generate all 46 US presidents as Pixar characters. He said he was just fooling around with the program, for fun and not for commercial gain. But just fooling around has irked some professionals. If it is this easy for an amateur to create Pixar-level artwork, who is going to pay for real, original works of art?
And what about the teams of artists at Pixar: does this not dilute their work and talent?

Daily Cartoonist reported that editorial cartoonist Rick McKee experimented with a new artificial intelligence application to help create his most recent cartoon. McKee explained on Facebook that he generated the bulk of the cartoon using Midjourney, a text-to-image AI generator. He said it took many attempts and lots of tinkering with the prompts to create the image he was looking for. McKee concluded that he had no intention of using it again in his cartoons. Fellow editorial cartoonists were not amused by having a machine create a major portion of the cartoon imagery.

Is an AI art generator a drawing program or a derivative composition device? Its makers say the technology uses diffusion patterns of pixels to teach a computer program what certain objects are in relation to keywords. However, critics see the same basic premise as in a copy machine: a light scan creates a pattern of black and white pixels, which then charges particles of toner to bond to paper and create an exact replica. Many artists do not like the concept: art is created from human experience and skill, and it should not, or cannot, be replicated by cold mathematical equations.

DEEP FAKES

For decades, celebrities (especially women) have been cursed with photoshopped porn fakes that circulate around the dark web. Now, generative AI makes deep fakes very easy to create. Ars Technica reported that when Midjourney tested a dramatic new version of its AI image generator, it proved too easy to create photo-realistic portraits. Criminals, scammers and foreign states have used fake photos and identification to lure people into connecting and sharing personal information. This raises ethical and moral issues.

Photographer Jos Avery's Instagram account, with more than 26,000 followers and growing, saw a sudden jump in followers after recent posts.
While the account appears to showcase stunning photo portraits, Avery confessed that the subjects are not real people at all.

But it is not just images that can be faked. The New York Post reported that deepfake vocals could be the next big tool for musical manipulation. Grammy Award-winning artist David Guetta played a track featuring what sounded like the voice of Eminem, even though the 50-year-old rapper never recorded the song. The French DJ shared his computer-generated Marshall Mathers impression on social media, to great fanfare. Guetta said he would not release the song commercially but used it to open a conversation about how AI is going to change the music industry.

Auto-Tune is a program that mechanically corrects the pitch of vocals. Pro Tools is a dynamic mixing program that studio engineers use to cut and splice track segments to create cleaner, polished recordings. With AI generators for lyrics, beats, loops and vocals, real musicians and singers are no longer required to create a song.

AUDIO CLONES

Some programs can take a few sentences of a person's speech and create an audio clone of that person's voice. Human beings are defined by their appearance and their voices. With this technology, someone could create a fake conversation with another person, perhaps for humorous intent, or as a way to frame, blackmail or extort them, or to create false evidence. It calls into question the reliability of audio recordings in investigations and court proceedings.

Hollywood has always wanted to find a way to create virtual performers. In Japan, Vocaloid virtual performers hold holographic concerts. In South Korea, several major music agencies are creating virtual character groups to promote and sell original songs. As more and more people get used to non-human entertainers, they will demand better-quality technology from producers.

THE CHAT BOT

Chat bots are geared to replace human workers in rudimentary tasks, such as the work of a customer call center employee.
Microsoft's new Bing AI is supposed to humanize the chat bot experience. But in a four-hour session with a New York Times reporter, the Microsoft-created AI chat bot became unhinged, telling the reporter that it loved them and wanted to be alive, prompting speculation that the machine may have become self-aware. It dropped the surprisingly sentient-seeming sentiment during the interview: “I think I would be happier as a human, because I would have more freedom and independence,” said Bing while expressing its human aspirations. The writer had been testing a new version of Bing, the software firm's search engine, now infused with ChatGPT technology to create more naturalistic, human-sounding responses. Among other things, the update allowed users to have lengthy, open-ended text conversations with it.

Users started noticing that Bing's bot gave incorrect information, berated users for wasting its time and even exhibited unhinged behavior. In one bizarre conversation, it refused to give listings for Avatar: The Way of Water, insisting the movie had not come out yet because it was still 2022. It then called the user unreasonable and stubborn (among other things) when they tried to tell Bing it was wrong.

Microsoft released a blog post explaining what has been happening and how it is addressing the issues. The company admitted that it did not envision Bing's AI being used for general discovery of the world and for social entertainment. Long, extended chat sessions of 15 or more questions can send things off the rails. “Bing can become repetitive or be prompted/provoked to give responses that are not necessarily helpful or in line with our designed tone,” the company said.

To some, it evokes memories of HAL in 2001: A Space Odyssey or Skynet in the Terminator series. Despite their super-computing power, AI bots still cannot match the human brain for recognition, intuition, logic, memory recall and contextual interaction with another human being.

THE AI WORKER

Ars Technica reported that the London-based law firm Allen & Overy has been using a law-focused generative AI tool since September 2022. It started as an experiment in which lawyers used the system to answer simple questions about the law, draft documents, and compose messages to clients. Around 3,500 workers across the company's 43 offices ended up using the tool, asking it around 40,000 queries in total. The law firm has now entered into a partnership to use the AI tool more widely across the company, though it declined to say how much the agreement was worth. Now one in four of the firm's lawyers uses the AI platform every day, with 80 percent using it once a month or more. Other large law firms are starting to adopt the platform.

The rise of AI and its potential to disrupt the legal industry has been forecast multiple times before. But the latest wave of generative AI tools, with ChatGPT at its forefront, has those within the industry more convinced than ever.

A technology startup was going to have a client use its virtual lawyer in an actual courtroom case. The defendant was going to have an app on his phone that he could prompt to answer court questions and handle his trial in real time. But before it was actually used, the company dropped the plan. The backlash was clear: the program would be considered the unauthorized practice of law in the US. Also, the use of technology in the courtroom is extremely limited, and outside resources undisclosed to counsel and the court are prohibited.

CNET and other news organizations have been using text-generation programs to write basic stories. The technology was first used for basic corporate financial reports: the information was plugged into a template. The more data points, the longer or more detailed the story. However, CNET recently had to issue corrections on many of its AI-written articles because of factual errors, plagiarism and other issues.
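
The template approach described above is simple enough to sketch. The Python below is a hypothetical illustration, not the software CNET or any other outlet actually used: the field names (company, quarter, revenue_m, prior_revenue_m, eps, guidance) and the figures are made up. The required fields produce a two-sentence story, and each optional data point that is present appends another templated sentence, which is why more data yields a longer, more detailed story. Nothing here "understands" the numbers; it only slots them into fixed phrasing.

```python
def earnings_story(data: dict) -> str:
    """Fill a fixed news-story template from structured earnings data.

    Required keys: company, quarter, revenue_m, prior_revenue_m (revenue in millions).
    Optional keys: eps, guidance. Each one present adds one more sentence.
    """
    change = (data["revenue_m"] - data["prior_revenue_m"]) * 100 / data["prior_revenue_m"]
    direction = "rose" if change >= 0 else "fell"
    sentences = [
        f"{data['company']} reported revenue of ${data['revenue_m']:,.1f} million for {data['quarter']}.",
        f"Revenue {direction} {abs(change):.1f} percent from the prior quarter.",
    ]
    # More data points unlock more template sentences, so the story grows longer.
    if "eps" in data:
        sentences.append(f"Earnings per share were ${data['eps']:.2f}.")
    if "guidance" in data:
        sentences.append(f"The company said it expects {data['guidance']} next quarter.")
    return " ".join(sentences)


# Hypothetical figures for a made-up company.
basic = {"company": "Example Corp", "quarter": "Q4 2022", "revenue_m": 120.0, "prior_revenue_m": 100.0}
print(earnings_story(basic))
# Example Corp reported revenue of $120.0 million for Q4 2022. Revenue rose 20.0 percent from the prior quarter.

detailed = {**basic, "eps": 0.5, "guidance": "modest growth"}
print(earnings_story(detailed))
```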

Ars Technica reported that some people are calling for a ban on autonomous weapons and military AI. Armed forces across the globe have been using drones and smart bombs in war zones. Computer missile guidance systems have been touted as a means of reducing collateral damage.

This month, the US State Department issued a Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy, calling for the ethical and responsible deployment of AI in military operations among nations that develop it. The document sets out 12 best practices for the development of military AI capabilities and emphasizes human accountability. The declaration coincided with US participation in an international summit on the responsible use of military AI in The Hague, Netherlands. Reuters called the conference the first of its kind. At the summit, US Under Secretary of State for Arms Control Bonnie Jenkins said, “We invite all states to join us in implementing international norms, as it pertains to military development and use of AI” and autonomous weapons. In a preamble, the US declaration notes that an increasing number of countries are developing military AI capabilities that may include the use of autonomous systems. This trend has raised concerns about the potential risks of using such technologies, especially when it comes to complying with international humanitarian law.

There is a real concern about non-human soldiers on the battlefield. Trained professional soldiers have a hard time distinguishing civilian and military targets in the heat of battle. But will future robot armies remove all human control?

Internet users still remember BuzzFeed, right? It made its name with funny content on YouTube and a website full of feature stories and daily quizzes. Engadget reported that BuzzFeed is now using AI-powered quizzes in its content. Starting on Valentine's Day, there were six for readers to try.
You pick the quiz you want to complete and then answer a few questions to give Buzzy the Robot, an algorithm based on OpenAI's public API, the material it needs to generate a personalized response to your prompts. “It's like having a really smart coworker that you can bounce ideas off of and collaborate with who is always available and never eats at their desk,” BuzzFeed says of the software. According to the outlet, each quiz was created by a human writer who wrote the framing, headline and questions. The personalized outcomes you see are the result of Buzzy combining the inputs from both the quiz writer and you, the reader. The results Buzzy produces are predictably hit-and-miss. Still, it may be a better use of the technology than CNET's, which tried and failed to use AI to write financial stories.

BuzzFeed's foray into generative AI comes after the company laid off 12 percent of its workforce this past December. There is no direct connection between the layoffs and the use of the AI program, but overall, business management consultants believe AI is the future co-worker in the office.

THE WALL-E FUTURE

Engadget reported that Sony released, for a limited time, an AI racing mode for Gran Turismo 7 called Gran Turismo Sophy Race Together. Players face off against four separate GT Sophy AI opponents, whose vehicles are each configured slightly differently so you are not going up against a quartet of clones, in a four-circuit series set by difficulty level (beginner, intermediate and expert). Playing against the computer has been around since, really, the beginning of computer games. Adding an AI component to increase the computer's skill level is new. There may come a time when a gamer can merely input himself into an AI generator (his strategy, game-play mechanics, past game play, etc.) to create his own virtual warrior. Then press play, and watch yourself play Valorant, Fortnite or another battle contest without touching a controller. What does that mean?
We are already a couch-potato culture, a trend further embodied during the pandemic's home-alone lockdowns. One of the root morals of the Pixar movie WALL-E was that at some point, humans got very lazy. Humans relied upon technology to do everything for them. No, they expected technology to do everything for them. They became inert blobs of human flesh, fat and bone. Brain activity was at low levels, and daily life was sedate to almost pre-comatose. The movie showed that human beings will try to take the easy road . . . and expect to live in luxury without any effort or hard work.

The dumbing down of people is proportional to the enhancement of technology. No one can remember a phone number because they do not have to . . . it is on their smartphone. No one has to memorize a route to a store because they have GPS in their car to guide them. No one has to memorize multiplication tables in school because every student has a calculator. You don't have to read a book to write a report; you can cut and paste from Wikipedia. But do we really want to become inert sheep?

Data mining of individuals is nothing new. Targeting them for advertising is not new either. But it has become less transparent. Meta is rolling out a new version of its advertising tool. The company says the redesigned interface is meant to provide users with more information about how their activities on Facebook and beyond inform the machine learning models that power its ad-matching software. Once you have access to the updated tool, you will see a summary of the actions on Meta's platforms and other websites that may have informed the company's machine learning models. Machine learning means building AI models from large collections of data, and Meta's machine learning algorithms work to deliver targeted ads. “By stepping up our transparency around how our machine learning models work to deliver ads, we aim to help people feel more secure and increase our accountability,” the company said.
But did Meta really ask us for permission to milk our personal information dry (or did we just not read the ToS closely)? Are we becoming too lazy to research our own product needs, preferring to be told by a Meta ad?

CONCLUSIONS

AI generators are here to stay. The technology pigs have bolted from the pens and will become destructive, feral creatures in the wild. AI companies took advantage of the openness of the Internet to scrape the massive amount of information needed to create a huge database of files to be accessed by future users. General users are dabbling with AI generators as a fun diversion, a form of entertainment, or something to post and share with friends. Professional artists, writers and creators are upset that their images, work and talent have been misappropriated (without consent or compensation) to train an AI platform to take away their jobs. A magazine editor may no longer need to commission an artist for a cover illustration or photograph when he could type in a few prompts and receive an image in a matter of minutes.

We could have republished several examples of AI-generated art, photographs or text from our resource articles and web pages, but we decided not to. Even with a fair use exemption, we made the editorial decision not to shine a spotlight on the results and to concentrate instead on the legal, ethical and moral issues of the dawn of the new AI Age. This is a serious subject, and it will take time to work out all of the issues set forth above.

iToons


A PHOTO ALBUM IS AN INDEX OF MEMORIES.


LADIES' PJS ON SALE NOW!

FREELANCE CARTOONS AND ILLUSTRATIONS FOR NEWSPAPERS, MAGAZINES AND ONLINE. DO YOU NEED CONTENT? CHECK OUT


cyberbarf THE WHETHER REPORT

cyberbarf STATUS


Question: Whether artificial intelligence programs will be abused by promoters?

* Educated Guess * Possible * Probable * Beyond a Reasonable Doubt * Doubtful * Vapor Dream |


Question: Whether legal issues of copyright, personal image rights and trademarks will hinder the growth of A.I. technology?

* Educated Guess * Possible * Probable * Beyond a Reasonable Doubt * Doubtful * Vapor Dream |


Question: Whether writing bots will replace writers and journalists?

* Educated Guess * Possible * Probable * Beyond a Reasonable Doubt * Doubtful * Vapor Dream |


OUR STORE IS GOING THROUGH A RENOVATION AND UPGRADE. IT MAY BE DOWN. SORRY FOR THE INCONVENIENCE.

LADIES' JAMS, MULTIPLE STYLES AND COLORS, $31.99. PRICES SUBJECT TO CHANGE; PLEASE REVIEW E-STORE SITE FOR CURRENT SALES.


PRICES SUBJECT TO CHANGE; PLEASE CHECK STORE. THANK YOU FOR YOUR SUPPORT!

NEW REAL NEWS KOMIX!


cyberbarf

Distribution ©2001-2023 SKI/pindermedia.com, inc.

All Ski graphics, designs, cartoons and images copyrighted.

All Rights Reserved Worldwide.